Have you ever wondered how tech giants handle enormous amounts of data while maintaining high performance? Google Research has proposed a new answer: a compression algorithm that could change how much memory AI models need to run.

The 3 must-know facts

- TurboQuant is a compression algorithm developed by Google Research to optimize the deployment of AI models.

- This technology could cut memory requirements by a factor of six and improve the performance of large language models.

- The algorithm is still in the research phase, with additional details expected at the ICLR 2026 conference.

An innovative compression algorithm

TurboQuant, Google’s compression algorithm, was designed to reduce the computing resources that artificial intelligence models require. By cutting memory needs by a factor of six, it could prove crucial for large-scale applications such as Google’s Gemini models.
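To put the factor of six in perspective, here is a minimal arithmetic sketch. The number of stored values and the 16-bit baseline are assumptions chosen for illustration, not figures published by Google; the only number taken from the article is the sixfold reduction.

```python
def footprint_gib(num_values: int, bits_per_value: float) -> float:
    """Memory needed to store num_values numbers at a given bit width, in GiB."""
    return num_values * bits_per_value / 8 / 2**30


# Hypothetical workload: 8 billion stored values (an assumption for illustration).
NUM_VALUES = 8 * 10**9

# Assumed baseline: 16 bits per value, a common floating-point width.
baseline = footprint_gib(NUM_VALUES, 16)

# A sixfold reduction is equivalent to about 2.67 bits per value.
compressed = footprint_gib(NUM_VALUES, 16 / 6)

print(f"baseline:   {baseline:.2f} GiB")
print(f"compressed: {compressed:.2f} GiB")
print(f"reduction:  {baseline / compressed:.1f}x")
```

Under these assumptions, the same data would fit in one sixth of the memory, which is why the claim matters for services that run many model instances at once.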

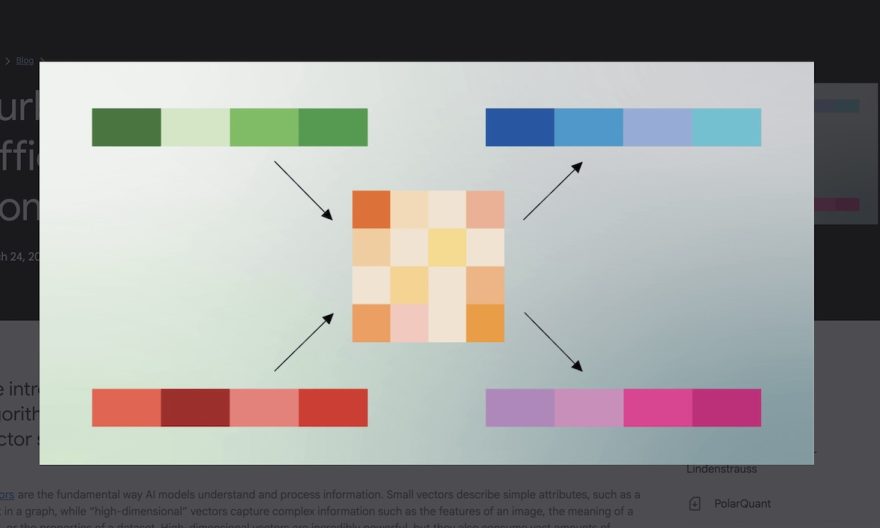

TurboQuant targets the key-value (KV) cache, the structure that retains essential information from previous calculations so that a model does not have to recompute it for every new word it generates. Caching speeds up text generation considerably, but the cache itself consumes a large amount of memory. Compressing it could therefore offer substantial efficiency gains over current methods.
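The key-value caching idea described above can be illustrated with a toy example. This is a simplified sketch of caching in general, not TurboQuant itself: the `KVCache` class, the `project` helper, and the two-dimensional token vectors are all hypothetical stand-ins for what a real model would learn.

```python
import math


def attend(query, keys, values):
    """Scaled dot-product attention of one query over all cached keys and values."""
    scale = 1.0 / math.sqrt(len(query))
    scores = [scale * sum(q * k for q, k in zip(query, key)) for key in keys]
    top = max(scores)  # subtract the maximum for numerical stability
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) / total
            for i in range(dim)]


class KVCache:
    """Stores the key and value vector of every token processed so far."""

    def __init__(self):
        self.keys = []
        self.values = []

    def append(self, key, value):
        self.keys.append(key)
        self.values.append(value)


def project(token_vec, factor):
    # Hypothetical stand-in for a learned projection matrix.
    return [factor * x for x in token_vec]


def generate_step(cache, token_vec):
    """Compute the new token's key and value once; reuse all earlier ones."""
    cache.append(project(token_vec, 0.5), project(token_vec, 2.0))
    return attend(token_vec, cache.keys, cache.values)


cache = KVCache()
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs = [generate_step(cache, t) for t in tokens]
print(len(cache.keys), "cached key vectors after", len(tokens), "tokens")
```

Each step computes only one new key and one new value, which is where the speedup comes from. The cost is that the cache grows with every token, and that growing memory footprint is what a compression scheme would aim to shrink.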

Impact on the tech industry

The announcement of TurboQuant had immediate repercussions on the market, notably causing a drop in the shares of memory and storage manufacturers. Although this innovation does not solve the memory chip shortage, it could reduce the pressure on the resources needed for AI model inference.

Matthew Prince, CEO of Cloudflare, compared TurboQuant to previous innovations like DeepSeek, highlighting its potential to transform the tech industry.

Outlook for the future

For now, TurboQuant remains in the research phase, and Google plans to share more information at the ICLR 2026 conference. If the promises of this algorithm materialize, it could not only transform artificial intelligence but also optimize other Google services, including its search engine.

The challenges of the memory chip shortage

Although TurboQuant may ease memory demand for inference, training AI models still requires a huge number of high-bandwidth memory (HBM) chips. The global shortage of these components remains a major challenge for the industry, affecting the cost and availability of electronic devices. Companies must continue to seek innovative solutions to overcome these obstacles while developing more efficient and sustainable technologies.
